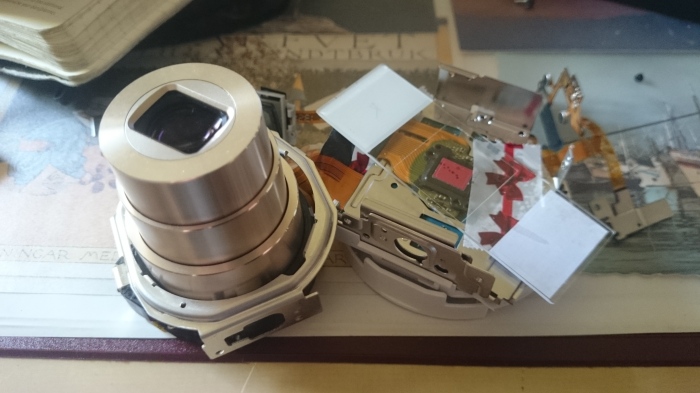

Building the microscope around a smartphone's camera requires removing all of its lenses, which means destroying an otherwise working phone.

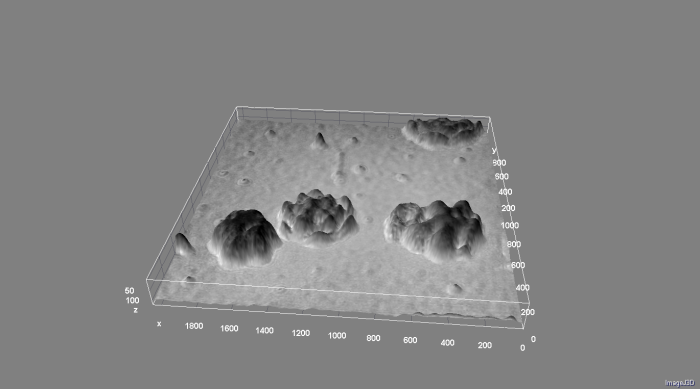

Even though the design is open source, this makes it really expensive. So why not find a solution that still uses a smartphone, but only to process the raw data coming from a third-party device? While looking for a good option, with the requirements that the pixel size be as small as possible and that the camera be accessible from a smartphone, I found the Sony QX10 digital still camera.

Continue reading “Holoscop V3 – Sony Wifi-Cam as lensless Microscope (R.I.P)”